The True Impact of AI in Process Engineering: A Technical and Operational Perspective

AI in Process Engineering: From Hype to Measurable Operations Value

AI has moved from pilots to board‑level agendas across refining, petrochemicals, energy, and advanced manufacturing. The core question for leaders is no longer whether AI matters, but where it creates repeatable value and how to scale it beyond pilots.

1) The Economic Case—Real, but Execution‑Dependent

- AI’s macro upside is significant, yet conversion to plant P&L depends on data maturity, model design, and operational integration.

- Early adopters report 10–15% throughput gains, 4–5% EBITA improvement, and up to 20% productivity uplift—material in high‑throughput assets (refineries, crackers, concentrators).

- Translation: even low single‑digit improvements can deliver multi‑million‑dollar annual value per site.

Implication: Treat AI as an engineering program with ROI accountability, not a tooling experiment.
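A back‑of‑envelope check of the “low single‑digit” point above. Every input here (throughput, unit margin, uplift, operating days) is a hypothetical placeholder rather than data from any real site, and the function assumes the extra volume sells at the same unit margin.

```python
def annual_uplift_value(throughput_per_day: float,
                        margin_per_unit: float,
                        uplift_fraction: float,
                        operating_days: float = 350.0) -> float:
    """Annual value of a fractional throughput uplift.

    Assumes (hypothetically) that the extra volume is sold at the same
    unit margin and that the uplift holds on every operating day.
    """
    extra_units_per_day = throughput_per_day * uplift_fraction
    return extra_units_per_day * margin_per_unit * operating_days


# Hypothetical refinery: 150,000 bbl/day, $6/bbl margin, 1% throughput uplift.
value = annual_uplift_value(150_000, 6.0, 0.01)
print(f"${value:,.0f} per year")  # prints "$3,150,000 per year"
```

Under these illustrative inputs, a 1% uplift is worth about $3 million a year, which is why the baseline and measurement discipline matters as much as the model.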

2) Adoption vs. Scale—Why Most Value Stalls

- Many plants run single‑unit pilots; few achieve enterprise scale.

- Common blockers:

  - Historian gaps/noisy instruments (low signal‑to‑noise, uncalibrated sensors)

  - Black‑box models lacking operator trust

  - No link to DCS/APC, MES, CMMS/ERP (insights don’t change setpoints or work orders)

  - Weak governance and cyber posture (models can’t be promoted or sustained)

Principle: If a model doesn’t change a control variable or a work order, it won’t change outcomes.

3) Where AI Delivers Tangible Value Today

3.1 Predictive Maintenance & Reliability

- Use cases: bearing degradation, pump cavitation, heat‑exchanger fouling, compressor surge risk.

- Methods: supervised/unsupervised anomaly detection, RUL estimation, hybrid digital twins.

- Operating results: 5–9% prediction error on key variables; fewer unplanned outages; optimized PM windows; parts/labor rationalization.

- System pattern: Sensors → Historian → Feature store → Models (RUL/anomaly) → CMMS work orders → Feedback loop to models.

Why it works: Clear failure modes + labeled events + maintenance outcomes = closed reliability loop.

3.2 Process Optimization & Advanced Control

- Targets: energy intensity (MJ/ton), product yield/grade, off‑spec reduction, cycle time.

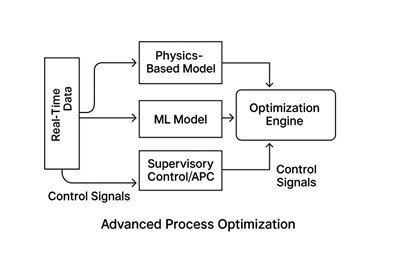

- Methods: multivariate ML, hybrid (first‑principles + ML), soft sensors, surrogate models; supervisory optimization feeding APC.

- Results: consistent 1–3% margin uplift in mature units; >10% uplift in variable ore/feed cases.

- Closed‑loop path: AI recommendations → operator advisory → APC constraints/setpoints → sustained performance.

Why it works: ML captures nonlinear interactions and dynamics that linear, APC‑only approaches miss.

3.3 Digital Twins as Decision Support

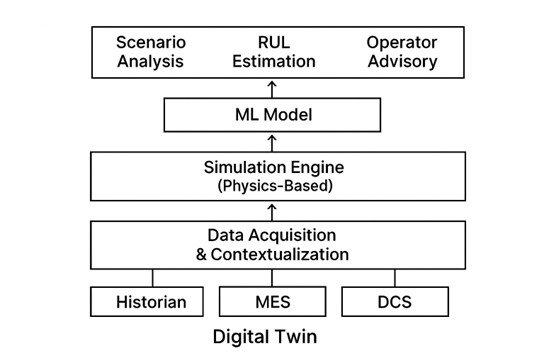

- Architecture: real‑time data + physics engine + ML layer + explainable AI + scenario/RUL modules.

- Outcomes: scenario planning for feed/slate changes, energy/cost trade‑offs, maintenance timing, and safety margins.

Shift: From 3D visualization to operational intelligence guiding daily decisions.
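To illustrate the scenario module, the sketch below uses a textbook relation for a fouling heat exchanger (fouling as a series resistance, 1/U = 1/U_clean + R_f, with duty Q = U·A·LMTD) to compare the energy penalty of deferring a cleaning against its cost. The exchanger size, fouling scenarios, energy price, and cleaning cost are hypothetical, and holding LMTD constant is a simplification a real twin would not make.

```python
def fouled_u(u_clean_w_m2k, fouling_m2k_w):
    """Overall coefficient with fouling added in series: 1/U = 1/U_clean + R_f."""
    return 1.0 / (1.0 / u_clean_w_m2k + fouling_m2k_w)


def duty_kw(u_w_m2k, area_m2, lmtd_k):
    """Q = U * A * LMTD, converted from W to kW (LMTD held fixed for simplicity)."""
    return u_w_m2k * area_m2 * lmtd_k / 1000.0


def deferral_penalty(u_clean, area, lmtd, fouling, price_per_kwh, hours):
    """Cost of heat not recovered over `hours` if cleaning is deferred."""
    lost_kw = duty_kw(u_clean, area, lmtd) - duty_kw(fouled_u(u_clean, fouling), area, lmtd)
    return lost_kw * hours * price_per_kwh


# Hypothetical exchanger and economics; each fouling value is one scenario.
CLEANING_COST = 15_000.0
for r_f in (0.0001, 0.0003, 0.0005):
    penalty = deferral_penalty(500.0, 200.0, 30.0, r_f, 0.05, hours=30 * 24)
    action = "clean now" if penalty > CLEANING_COST else "defer"
    print(f"R_f={r_f:.4f} m2K/W: 30-day penalty ${penalty:,.0f} -> {action}")
```

A fuller twin would estimate R_f from live temperatures and flows, project its growth over time, and feed the result into the maintenance schedule.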

4) Persistent Myths to Retire

- “AI automatically delivers ROI.”

  ROI requires contextualized data, feature engineering, and workflow wiring to DCS/MES/CMMS.

- “Generative AI will replace engineers.”

  Safety‑critical operations demand domain judgment; AI augments engineers with speed and pattern detection.

- “Scaling is mostly technical.”

  The limiting factors are governance, change management, cybersecurity, and total cost of ownership (TCO).

5) The Operating Model of High‑Performing Programs

A. Data Foundations

- Calibrated sensors, synchronized timestamps, unit‑consistent tags, event logs, downtime coding.

- Asset hierarchy and context (P&IDs, tag‑to‑equipment mapping, material balance integrity).
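A minimal sketch of two of these hygiene steps: converting tags to a site‑standard unit, and aligning irregular historian samples onto a common time grid using sample‑and‑hold with a staleness limit. The conversion table, tag values, and two‑minute staleness limit are hypothetical; historians typically offer their own interpolation modes, which may behave differently.

```python
from bisect import bisect_right
from datetime import datetime, timedelta

# Hypothetical conversion table keyed by (source unit, site-standard unit).
CONVERSIONS = {
    ("degF", "degC"): lambda v: (v - 32.0) * 5.0 / 9.0,
    ("degC", "degC"): lambda v: v,
    ("psig", "kPag"): lambda v: v * 6.894757,
}


def to_standard(value, unit, target):
    if (unit, target) not in CONVERSIONS:
        raise ValueError(f"no conversion defined from {unit} to {target}")
    return CONVERSIONS[(unit, target)](value)


def align_sample_and_hold(samples, grid, max_staleness):
    """For each grid time, take the latest sample at or before it.

    `samples` is a time-sorted list of (timestamp, value). Returns None where
    no sample exists yet or the latest one is older than `max_staleness`.
    """
    times = [t for t, _ in samples]
    aligned = []
    for g in grid:
        i = bisect_right(times, g) - 1
        if i < 0 or g - times[i] > max_staleness:
            aligned.append(None)
        else:
            aligned.append(samples[i][1])
    return aligned


t0 = datetime(2024, 1, 1, 0, 0)
raw = [(t0, 212.0), (t0 + timedelta(seconds=70), 230.0)]  # degF, irregular timestamps
grid = [t0 + timedelta(minutes=m) for m in range(5)]
held = align_sample_and_hold(raw, grid, max_staleness=timedelta(minutes=2))
print([None if v is None else to_standard(v, "degF", "degC") for v in held])
# prints [100.0, 100.0, 110.0, 110.0, None]
```

Returning None for stale values, rather than silently holding them forever, keeps a frozen instrument from masquerading as a steady process.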

B. Hybrid Modeling Approach

- Physics + ML (e.g., soft constraints from first principles; ML for residuals and nonlinearities).

- Model telemetry and drift detection (population stability index, residual monitoring, retrain triggers).
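A minimal sketch of the population stability index mentioned above, PSI = Σ (aᵢ − eᵢ)·ln(aᵢ / eᵢ), where eᵢ and aᵢ are the shares of training and recent data falling in bin i. The bin edges, the small floor for empty bins, and the 0.25 retrain threshold (a commonly quoted rule of thumb, not a standard) are assumptions to tune per variable.

```python
import math
from bisect import bisect_right


def population_stability_index(expected, actual, edges, floor=1e-6):
    """PSI = sum_i (a_i - e_i) * ln(a_i / e_i) over len(edges) + 1 bins.

    `edges` are sorted interior cut points; `floor` keeps empty bins from
    producing log(0).
    """
    def shares(values):
        counts = [0] * (len(edges) + 1)
        for v in values:
            counts[bisect_right(edges, v)] += 1
        return [max(c / len(values), floor) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


RETRAIN_THRESHOLD = 0.25  # assumed rule-of-thumb trigger

training = [float(x) for x in range(100)]     # hypothetical reference window
recent = [float(x) for x in range(20, 120)]   # hypothetical shifted window
psi = population_stability_index(training, recent, edges=[25.0, 50.0, 75.0])
print(f"PSI={psi:.3f}, retrain={psi > RETRAIN_THRESHOLD}")
```

PSI flags input drift; residual monitoring on the model's own errors is the complementary check, since inputs can stay stable while the process relationship changes.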

C. Deep Integration

- Northbound: MES/ERP for demand, cost, and schedule.

- Southbound: DCS/APC for setpoint/constraint changes; CMMS for automated work orders.

- Human‑in‑the‑loop: operator advisories with confidence bounds and rationale (XAI).
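A minimal sketch of the human‑in‑the‑loop handoff: the model's proposed setpoint is clamped to hard limits and a maximum move size before it reaches the operator, and the predicted KPI carries an approximate 95% band (±1.96σ, assuming roughly Gaussian residuals). The tag name, limits, step size, and linear KPI model are hypothetical, and nothing here writes to a DCS; that write would go through the site's APC/DCS interface after operator approval.

```python
from dataclasses import dataclass


@dataclass
class Advisory:
    """Hypothetical operator-advisory payload."""
    tag: str
    current: float
    recommended: float
    kpi_pred: float
    kpi_low: float
    kpi_high: float
    rationale: str


def build_advisory(tag, current, proposed, limits, max_step, predict,
                   residual_std, rationale, z=1.96):
    """Clamp a proposed setpoint, then attach a KPI prediction with bounds."""
    lo, hi = limits
    target = min(max(proposed, lo), hi)                      # hard operating limits
    step = max(-max_step, min(max_step, target - current))   # rate-of-change limit
    recommended = current + step
    kpi = predict(recommended)
    return Advisory(tag, current, recommended, kpi,
                    kpi - z * residual_std, kpi + z * residual_std, rationale)


adv = build_advisory(
    tag="TIC-201.SP", current=350.0, proposed=362.0, limits=(340.0, 358.0),
    max_step=5.0, predict=lambda sp: 82.0 + 0.2 * (sp - 350.0),  # hypothetical yield model, %
    residual_std=0.5, rationale="Yield rises with reactor temperature in this range",
)
print(adv)
```

Here the model asked for 362, the limits cap it at 358, and the move limit takes only 5 units this cycle, so the operator sees 355 with its bounds and rationale.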

D. Governance & Lifecycle Control

- MLOps pipelines, model/version registries, approvals, rollback; cybersecurity hardening.

- Change management, SOP updates, role definitions (process, maintenance, OT/IT).
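A minimal sketch of approval‑gated promotion with rollback, as an in‑memory registry. The class and method names are hypothetical and do not mirror any particular MLOps product; a real registry would persist state, timestamp every approval, and tie into the site's management‑of‑change workflow.

```python
from dataclasses import dataclass, field


@dataclass
class ModelRegistry:
    """Hypothetical in-memory model registry with approval-gated promotion."""
    versions: dict = field(default_factory=dict)   # version -> metadata
    approved: set = field(default_factory=set)     # versions cleared for production
    history: list = field(default_factory=list)    # promotions; last entry is live

    def register(self, version, metadata):
        if version in self.versions:
            raise ValueError(f"{version} is already registered")
        self.versions[version] = dict(metadata)

    def approve(self, version, approver):
        if version not in self.versions:
            raise KeyError(f"unknown version {version}")
        self.versions[version]["approved_by"] = approver
        self.approved.add(version)

    def promote(self, version):
        if version not in self.approved:
            raise PermissionError(f"{version} has not been approved")
        self.history.append(version)

    def rollback(self):
        """Revert production to the previously promoted version."""
        if len(self.history) < 2:
            raise RuntimeError("no earlier production version to roll back to")
        self.history.pop()
        return self.history[-1]

    @property
    def production(self):
        return self.history[-1] if self.history else None


reg = ModelRegistry()
for v in ("v1", "v2"):
    reg.register(v, {"owner": "process-engineering"})
    reg.approve(v, approver="unit-engineer")
reg.promote("v1")
reg.promote("v2")
print(reg.production)   # v2
print(reg.rollback())   # v1
```

Refusing to promote an unapproved version is the code‑level counterpart of the SOP and role definitions above.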

6) Practical Playbook: From Pilot to Plant‑Wide

- Pick line‑of‑sight value cases (PdM on critical assets; unit‑level energy/yield optimization).

- Stand up the data layer (historian quality, feature store, lineage, master data).

- Build hybrid models with interpretable features and guardrails aligned to safety limits.

- Wire into operations (APC/DCS setpoint pathways; CMMS work order generation; operator UX).

- Instrument ROI (baseline vs. post‑deployment KPIs; counterfactuals; confidence intervals).

- Operationalize MLOps (monitor drift, schedule retrains, track economic value).

- Scale by patterns (reference architectures, reusable features, model templates, SOPs).
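For the “instrument ROI” step, a minimal before/after comparison: the difference in mean daily KPI with an approximate 95% confidence interval built from Welch's standard error and a normal critical value. The yield figures are hypothetical. The sketch treats daily values as independent, which autocorrelated process data often violates (making the interval too narrow); with this few samples a t critical value would also widen it. It is not a counterfactual either: a model‑based baseline that adjusts for feed and ambient changes is the stronger approach.

```python
from math import sqrt
from statistics import mean, stdev


def uplift_with_ci(baseline, post, z=1.96):
    """Return (diff, low, high) for mean(post) - mean(baseline).

    Uses Welch's standard error with a normal critical value; assumes
    roughly independent samples.
    """
    diff = mean(post) - mean(baseline)
    se = sqrt(stdev(baseline) ** 2 / len(baseline) + stdev(post) ** 2 / len(post))
    return diff, diff - z * se, diff + z * se


# Hypothetical daily yields (%) one week before and one week after deployment.
baseline = [91.8, 92.1, 91.9, 92.3, 92.0, 91.7, 92.2]
post = [92.6, 92.9, 92.7, 93.0, 92.8, 92.5, 93.1]
diff, low, high = uplift_with_ci(baseline, post)
print(f"uplift {diff:.2f} pts, 95% CI [{low:.2f}, {high:.2f}]")
```

Reporting the interval, not just the point estimate, keeps the ROI claim honest when the uplift is small relative to normal process variation.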

7) Maturity Curve—Where the Industry Is Now

- Experimentation: PoCs, dashboards, limited data hygiene.

- Operationalization (Today): PdM, optimization, digital twins; growing APC integration.

- Autonomous Operations (Emerging): adaptive, self‑optimizing loops with formal safety envelopes.

Conclusion—From Ambition to Outcomes

AI in process industries is proven in predictive maintenance, process optimization, and digital twins. What’s uncommon is enterprise‑scale impact. The differentiators are data rigor, hybrid modeling, deep control‑system integration, and governed lifecycle management.

The strategic question:

How will we deploy AI so that it persistently changes setpoints, schedules, and work orders—and therefore P&L—at scale?

Organizations that operationalize AI as an engineering discipline will lead the next era of intelligent, resilient, high‑performance operations.